About

Innovative Data Exploration Laboratory (InDeX Lab) is an academic research group directed by Dr. A. Asudeh in the Computer Science department of the University of Illinois Chicago.

At InDeX Lab, we design Efficient, Accurate, and Scalable Algorithms for Data Science and AI.

Active Projects — Current lab directions. See past projects →

News and Announcements

- Congratulations to Mohsen Dehghankar on his KDD'26 paper, “Random-Access Ranked Retrieval and Similarity Search”.

- Congratulations to Mohsen Dehghankar on the “2025-2026 College of Engineering Exceptional Research Promise Award”.

- Mahdi Erfanian will be a Research Intern at Microsoft Code | AI team in Summer 2026.

- Mohsen Dehghankar will be a Research Intern at Adobe Research in Summer 2026.

- Congratulations to Nima Shahbazi on his SIGMOD'26 paper, “Fair-Count-Min: Frequency Estimation under Equal Group-wise Approximation Factor”.

- Congratulations to Mohsen Dehghankar on his VLDB'26 paper, “On Fair Epsilon Net and Geometric Hitting Set”.

- Congratulations to Rishi Advani and Mohsen Dehghankar on their ICDT'26 paper, “Dynamic Necklace Splitting”.

- Mahdi Erfanian will be a Research Intern at Microsoft Code | AI team in Spring 2026.

- Dr. Nima Shahbazi successfully defended his PhD dissertation in Oct. 2025. Congratulations! He will join Megagon Labs as a Research Scientist.

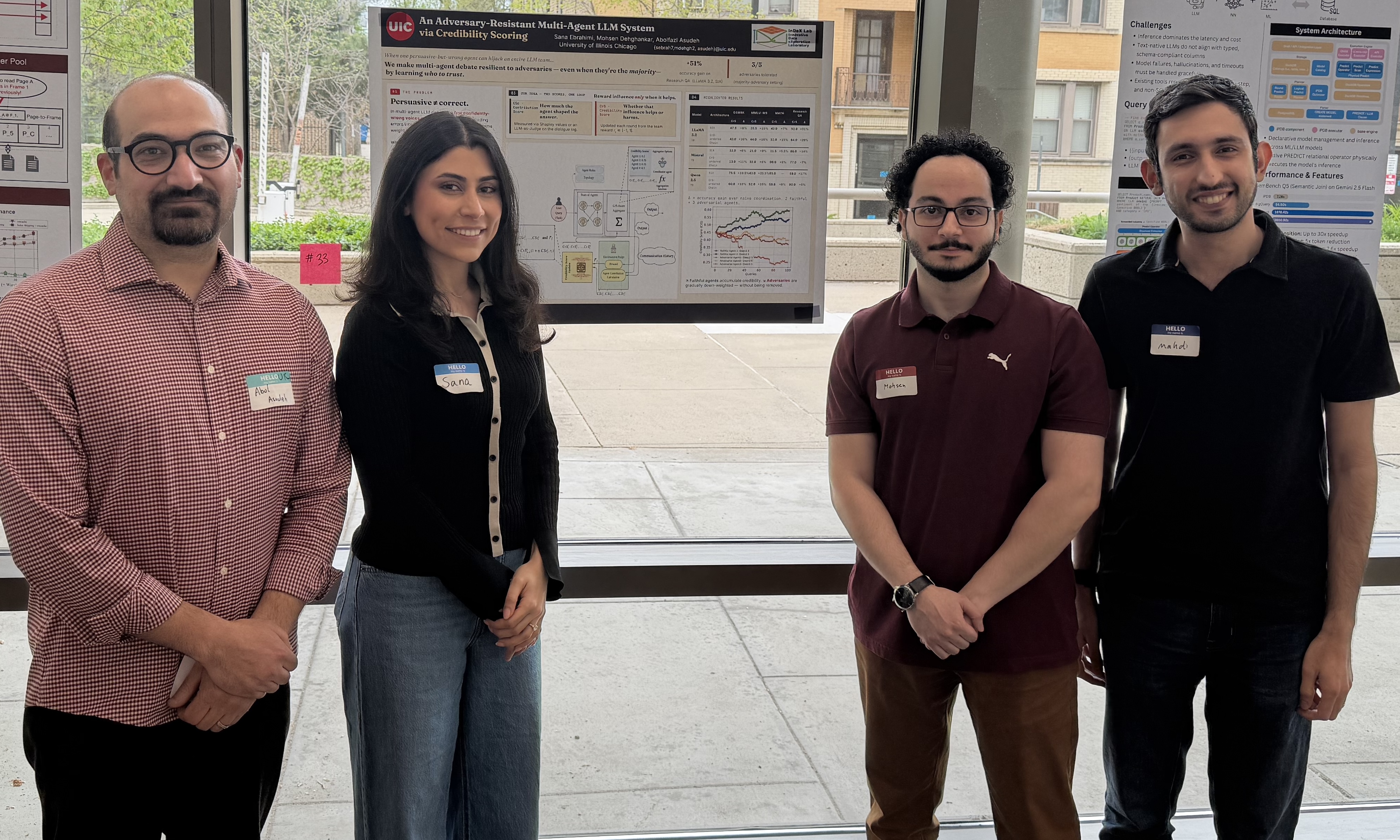

- Congratulations to Sana Ebrahimi and Mohsen Dehghankar on their AACL'25 paper, “An Adversary-Resistant Multi-Agent LLM System via Credibility Scoring”.

- Congratulations to Mohsen Dehghankar on his KDD'25 paper, “Rank It, Then Ask It: Input Reranking for Maximizing the Performance of LLMs on Symmetric Tasks”.

- Congratulations to Mohsen Dehghankar and Mahdi Erfanian on their ICML'25 paper, “An Efficient Matrix Multiplication Algorithm for Accelerating Inference in Binary and Ternary Neural Networks”.

- Congratulations to Nima Shahbazi on the “2024-2025 College of Engineering Exceptional Research Promise Award”.

- Nima Shahbazi will be a Research Intern at Microsoft Research, Gray Systems Lab in Summer 2025.

- Congratulations to Mohsen Dehghankar on his VLDB'25 paper, “Mining the Minoria: Unknown, Under-represented, and Under-performing Minority Groups”.

- Congratulations to Mohsen Dehghankar on his KDD'25 paper, “Fair Set Cover”.

- Congratulations to Mahdi Erfanian on his VLDB'24 paper, “Chameleon: Foundation Models for Fairness-aware Multi-modal Data Augmentation to Enhance Coverage of Minorities”.

- Congratulations to Sana Ebrahimi and Rishi Advani on their EDBT'25 paper, “Evaluating the Feasibility of Sampling-Based Techniques for Training Multilayer Perceptrons”.

- Congratulations to Nima Shahbazi and Mahdi Erfanian on their VLDB'24 demo paper, “FairEM360: A Suite for Responsible Entity Matching”.

- Congratulations to Nima Shahbazi on his VLDB Journal (2024) paper, “Reliability Evaluation of Individual Predictions: A Data-centric Approach”.

- Our (invited) paper, “Coverage-based Data-centric Approaches for Responsible and Trustworthy AI”, was published in Data Engineering Bulletin Vol. 48(1), March 2024.

- Congratulations to Sana Ebrahimi and Nima Shahbazi on their NAACL'24 paper, “Reliability and Equity through Aggregation in Large Language Models”.

- Congratulations to Nima Shahbazi on his (Megagon) internship project's acceptance in ICDE'24.

- Congratulations to Nima Shahbazi on his SIGMOD'24 paper, “FairHash: A Fair and Memory/Time-efficient Hashmap”.

- Nima Shahbazi will be a Research Scientist Intern at Megagon Labs in Summer 2023.

- Congratulations to Nima Shahbazi on his VLDB'23 paper, “Through the Fairness Lens: Experimental Analysis and Evaluation of Entity Matching”.

- Congratulations to Melika Mousavi and Nima Shahbazi on their EDBT'24 paper, “Data Coverage for Detecting Representation Bias in Image Data Sets: A Crowdsourcing Approach”.

- Congratulations to Rishi Advani on his KDD'23 paper, “Maximizing Neutrality in News Ordering”.

- Congratulations to Nima Shahbazi on his ACM Computing Surveys (CSUR) paper, “A Survey on Techniques for Identifying and Resolving Representation Bias in Data”.

- Congratulations to Khanh Duy Nguyen on his SDM workshop paper, “PopSim: An Individual-level Population Simulator for Equitable Allocation of City Resources”.

- Congratulations to Ian Swift and Sana Ebrahimi on their VLDB 2022 paper, “Maximizing Fair Content Spread via Edge Suggestion in Social Networks”.

- Here are the slides and other information about our SIGMOD'22 tutorial on "Responsible Data Integration: Next-generation Challenges".

- Congratulations to Ian Swift on his ICDE 2022 paper, “Fairness-Aware Range Queries for Selecting Unbiased Data”.

- A big thank you to Google for supporting our work on Cherry-picked Trendlines with the Research Scholar award!

- Congratulations to Nima Shahbazi on his SIGMOD 2021 paper, “Identifying Insufficient Data Coverage for Ordinal Continuous-Valued Attributes”. [paper] [slides] [video]

- Congratulations to Matteo Corain on his ICDE 2021 paper, “DBSCOUT: A density-based method for scalable outlier detection in very large datasets”. This paper is the outcome of his joint MS thesis with Politecnico di Torino (Italy), co-advised by Dr. Paolo Garza.

📌 Pinned Systems and Repositories 📌

Needle🪡🔍 is a deployment-ready open-source image retrieval database with high accuracy that can handle complex queries in natural language.

It is Fast, Efficient, and Precise, outperforming state-of-the-art methods.

Born from high-end research, Needle is designed to be accessible to everyone while delivering top-notch performance.

Whether you are a researcher, developer, or an enthusiast, Needle opens up innovative ways to explore your image datasets. ✨

📖 Detailed installation instructions: Getting Started.